Intel is betting that future data-center operations will depend on increasingly powerful servers running ASIC-based, programmable CPUs, and its wager rides on the development of infrastructure processing units (IPUs), Intel’s programmable networking devices designed to reduce overhead and free up performance for CPUs.

Intel is among a growing number of vendors—including Nvidia, AWS and AMD—working to build smartNICs and DPUs to support software-defined cloud, compute, networking, storage and security services designed for rapid deployment in edge, colocation, or service-provider networks.

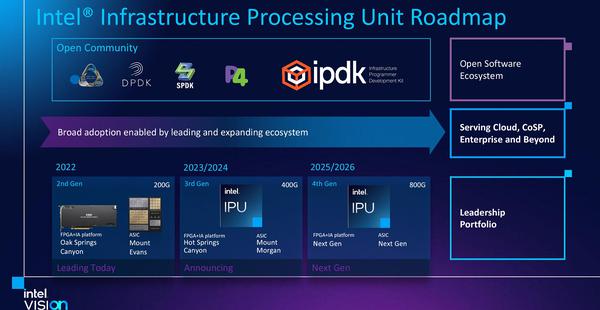

Intel’s initial IPU combines a Xeon CPU and FPGA but ultimately will morph into a powerful ASIC that can be customized and controlled with open system-based Infrastructure Programmer Development Kit (IPDK) software. IPDK runs on Linux and uses programming tools such as SPDK, DPDK and P4 for developers to control network and storage virtualization as well as workload provisioning.

At its inaugural Intel Vision event this week in Texas, Intel talked about other new chips and how AI will play in the data center. It laid out a roadmap for its IPU development and detailed why the device portfolio will be an important part of its data-center plans.

Specific to its IPU roadmap, Intel said it will deliver two 200Gb IPUs by the end of the year. One, code-named Mount Evans, was developed with Alphabet Inc.’s Google Cloud group and at this point will target high-end and hyperscaler data-center servers.

The ASIC-based Mount Evans IPU can support existing use cases such as vSwitch offload, firewalls, and virtual routing. It implements a hardware-accelerated NVM storage interface scaled up from Intel Optane technology to emulate NVMe devices.

The second IPU, code-named Oak Springs Canyon, is the vendor’s next-generation FPGA-based IPU, which pairs a Xeon D processor with an Intel Agilex FPGA to handle networking with custom programmable logic. It offers network virtualization function offload for workloads like Open vSwitch (OVS) and storage functions such as NVMe over Fabrics.

Looking further ahead, Intel said a third-generation IPU code-named Mount Morgan and an FPGA-based IPU code-named Hot Springs Canyon will be delivered in the 2023 or 2024 timeframe and will increase IPU throughput to 400Gb. In 2025 or 2026, Intel expects to deliver 800Gb IPUs.

One of the keys to Intel’s IPU technology is the fast programmable packet-processing engine that all of the devices support. Whether it’s an FPGA or an ASIC-based offering, customers can program it using the P4 programming language, which has been around since 2013 and supports processes such as lookups, changing, modifying, encryption, and compression, according to Nick McKeown, senior vice president and general manager of the Network and Edge Group (NEX) at Intel. McKeown has founded a number of network startups including Barefoot Networks, which Intel acquired in 2019, and he won the 2021 IEEE Alexander Graham Bell Medal for exceptional contributions to communications and networking sciences and engineering.
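To give a rough sense of the "lookup, then modify" style of packet processing that P4 programs describe, here is a minimal Python sketch of a match-action table. It is not P4 code and not an Intel or IPDK API. The names `Packet`, `LpmTable`, `forward`, and `drop` are hypothetical and chosen only for illustration, and a real device would perform the longest-prefix match in hardware rather than with a linear scan.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Callable, Optional


@dataclass
class Packet:
    """Hypothetical, heavily simplified packet header."""
    dst_ip: str
    ttl: int = 64
    egress_port: Optional[int] = None
    dropped: bool = False


Action = Callable[[Packet], None]


def forward(port: int) -> Action:
    """Build an action that sets the egress port and decrements the TTL."""
    def apply(pkt: Packet) -> None:
        pkt.egress_port = port
        pkt.ttl -= 1
    return apply


def drop(pkt: Packet) -> None:
    """Action that marks the packet as dropped."""
    pkt.dropped = True


class LpmTable:
    """Longest-prefix-match table: look up dst_ip, then run the matched action."""

    def __init__(self, default_action: Action) -> None:
        self.entries = []  # list of (network, action), longest prefix first
        self.default_action = default_action

    def add_entry(self, prefix: str, action: Action) -> None:
        self.entries.append((ip_network(prefix), action))
        # Keep the most specific prefixes at the front so the first hit wins.
        self.entries.sort(key=lambda e: e[0].prefixlen, reverse=True)

    def apply(self, pkt: Packet) -> None:
        addr = ip_address(pkt.dst_ip)
        for net, action in self.entries:
            if addr in net:
                action(pkt)
                return
        self.default_action(pkt)


# Example: two overlapping routes; anything unmatched is dropped.
table = LpmTable(default_action=drop)
table.add_entry("10.0.0.0/8", forward(1))
table.add_entry("10.1.0.0/16", forward(2))

pkt = Packet(dst_ip="10.1.2.3")
table.apply(pkt)  # matches the more specific /16 entry
```

In this sketch the control plane's job is populating the table with `add_entry`, while the data plane's job is `apply`; that split between table contents and per-packet processing is the general idea behind programmable pipelines, though actual P4 targets differ considerably in detail.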

“Enterprise or cloud-based data centers can program servers and devices from the data center to the edge with packet-processing commonality that lets you control network congestion, encapsulation, routing and other features for controlling workloads,” McKeown said. “And we expect that technology to have a lot of application in firewalls, gateways, enterprise load balancing, storage offload, and more. We’re expecting IPUs to be extremely efficient compute devices for all of these kinds of network applications.”

“When we look back in a few years, I think we will find that enterprise data centers and hyperscalers will think of the network that interconnects CPUs and accelerators… as something that they program. They will think of it as IPUs that they program,” McKeown said.

The idea is to let enterprises run their infrastructures in the same way that today only a hyperscaler can afford, said Soni Jiandani, co-founder and chief business officer for Pensando, in a recent Network World article. AMD recently purchased Pensando for $1.9 billion to gain access to the DPU-based architecture and technology Pensando develops. “There are a wide range of use cases such as 5G and IoT that need to support lots of low-latency traffic,” Jiandani said.

Security applications are also an emerging use case for IPUs, DPUs and smartNICs.

In virtual environments, putting functions like network-traffic encryption into smartNICs will be big, according to VMware. “In our case, we’ll also have the NSX firewall and full virtual SDN software or vSphere switch on the smartNIC that will let customers have a fully programmable, distributed security system,” said Paul Turner, vice president of product management with VMware, in an earlier interview about the emergence of smartNICs in the enterprise.

“In terms of zero encryption and fast processing, we can do line rate encryption with the IPU—200G today, 400G in the future—of the most popular encryption algorithms. Our customers then can program behaviors that were best suited for their environment, or they can just adopt standard encryption algorithms,” Intel’s McKeown said.
