AMD is raising the bar in its battle against Intel in the data center with a new lineup of EPYC CPUs that use its 3D packaging technology to triple the L3 cache, giving them a significant hike in performance.

The Santa Clara, California-based company is upping the ante in data centers with a new class of its EPYC CPUs, code-named Milan-X, which uses its 3D V-Cache packaging technology. AMD said V-Cache stacks up to 512 MB of additional cache memory on top of the CPU, resulting in a more than 50% uplift in performance for workloads such as computational fluid dynamics, structural analysis, and electronic design automation.

Microsoft will also use Milan-X in a new offering from its Azure cloud computing service, the firms said.

AMD will beat Intel to the market with a server processor that uses 3D chip packaging, with plans to launch the Milan-X CPUs in the first quarter of 2022. Intel is banking on its advanced packaging prowess to help it regain its leadership in the data center and other areas, but it is not using its 3D Foveros technology in the latest Sapphire Rapids server CPUs, which instead use Intel’s 2.5D chip packaging technology called EMIB.

AMD introduced Milan-X and several other chips, including its latest server GPU to take on Nvidia, during its Accelerated Data Center event on Monday. It also revealed new details about its future “Genoa” server CPUs.

AMD also said it landed Meta Platforms, the firm formerly known as Facebook, as a buyer for its EPYC CPUs, cementing its market share gains against Intel. For AMD, the win means that its server chips are designed into data centers by ten of the world’s largest hyperscale companies, including top US cloud computing firms (AWS, Microsoft, Google, IBM, and Oracle) and their Chinese counterparts: Baidu, Alibaba, and Tencent.

For CEO Lisa Su, winning customers such as Microsoft and Meta has been a major part of her turnaround plan for AMD. As Intel's struggle to move to more advanced chip production hobbled its ability to compete in recent years, AMD has re-engineered its lineup of server processors, which can sell for up to thousands of dollars each. It has rolled out server chips that match or beat Intel’s Xeons on performance benchmarks.

The Milan-X processors will have the same capabilities and features as the EPYC 7003 server chips introduced in March, code-named Milan, which come with up to 64 cores manufactured on the 7-nm process by TSMC.

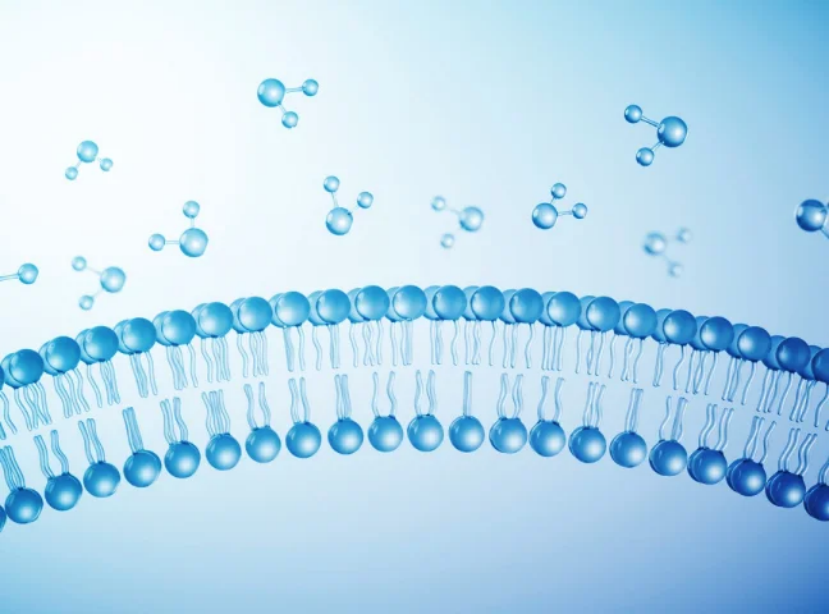

The “Zen 3” architecture at the heart of the EPYC CPUs brings improvements in clock speeds, latency, cache, and memory bandwidth. The processor is disaggregated into up to eight compute dies (also called chiplets or tiles) that contain up to eight cores each. The flagship processor features eight compute dies, each with up to 32 MB of shared L3 cache directly on the die, for a total of 64 cores and 256 MB of L3 cache. The L3 cache serves as the chip’s central repository, where data is stored for fast, repeated access by the CPU cores.

The compute tiles are co-packaged with a central I/O tile based on the 14-nm node from GlobalFoundries that coordinates data traveling between the compute tiles surrounding it. The I/O tile supports up to eight DDR4 channels clocked at up to 3.2 GHz and up to 128 lanes of PCIe Gen 4. All the dies are assembled with TSMC’s 2.5D chip packaging technology on a substrate that resembles a very compact printed circuit board (PCB).

The new Milan-X CPUs will feature up to 64 cores, the same number as the existing third-generation EPYC CPU lineup, and they will also be “fully compatible” with existing EPYC server platforms with a BIOS upgrade.

AMD said V-Cache adds another 64 MB of SRAM on top of the 32 MB present on every compute tile in the current third-generation EPYC CPUs, giving Milan-X up to 96 MB of L3 cache per compute die. The V-Cache is manufactured by TSMC on 7-nm and measures 6 mm by 6 mm. With a maximum of eight compute dies as part of Milan-X’s processor architecture, that translates into up to 768 MB of shared L3 cache in the CPU.
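The per-die and per-socket totals follow from simple multiplication. The short Python sketch below reproduces that arithmetic using only the figures quoted above; the constant and function names are illustrative and do not come from AMD.

```python
# Figures quoted in the article, in megabytes.
BASE_L3_PER_DIE_MB = 32    # L3 already on each compute die
VCACHE_PER_DIE_MB = 64     # SRAM stacked on top of each compute die
MAX_COMPUTE_DIES = 8       # compute dies in the flagship part


def l3_per_die_mb(base=BASE_L3_PER_DIE_MB, stacked=VCACHE_PER_DIE_MB):
    """L3 capacity of one compute die with V-Cache stacked on it."""
    return base + stacked


def l3_per_socket_mb(dies=MAX_COMPUTE_DIES):
    """L3 capacity of a whole CPU with the given number of compute dies."""
    return dies * l3_per_die_mb()


print(l3_per_die_mb())                                   # 96
print(l3_per_socket_mb())                                # 768
print(MAX_COMPUTE_DIES * VCACHE_PER_DIE_MB)              # 512 MB of added cache
print(l3_per_socket_mb() // (MAX_COMPUTE_DIES * BASE_L3_PER_DIE_MB))  # 3x the 256 MB of Milan
```

The same numbers account for the 512 MB of additional cache and the tripling of L3 cited at the top of the article.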

“This additional L3 cache relieves memory bandwidth pressure and reduces latency and that in turn speeds up application performance dramatically,” Su said.

AMD said that opens the door for customers to buy dual-socket servers with more than 1.5 GB of L3 cache. When adding the L2 and L1 caches, the Milan-X processors will have a total of 804 MB of cache per socket.
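The 804 MB figure can be roughly reconstructed. The sketch below assumes the per-core cache sizes of the Zen 3 core (512 KB of L2, plus 32 KB each of L1 instruction and L1 data cache); those sizes are not stated in the article and are labeled as assumptions in the code.

```python
KB_PER_MB = 1024
MB_PER_GB = 1024

CORES_PER_SOCKET = 64
L3_PER_SOCKET_MB = 768        # from the article
L2_PER_CORE_KB = 512          # assumption: Zen 3 per-core L2 size
L1_PER_CORE_KB = 32 + 32      # assumption: Zen 3 L1 instruction + L1 data


def total_cache_per_socket_mb(cores=CORES_PER_SOCKET):
    """Sum of L3, L2 and L1 capacity for one socket, in MB."""
    l2_mb = cores * L2_PER_CORE_KB / KB_PER_MB
    l1_mb = cores * L1_PER_CORE_KB / KB_PER_MB
    return L3_PER_SOCKET_MB + l2_mb + l1_mb


def dual_socket_l3_gb():
    """L3 capacity of a two-socket server, in GB (1 GB = 1024 MB)."""
    return 2 * L3_PER_SOCKET_MB / MB_PER_GB


print(total_cache_per_socket_mb())  # 804.0
print(dual_socket_l3_gb())          # 1.5
```

Under these assumptions, L2 contributes 32 MB and L1 contributes 4 MB, which together with 768 MB of L3 gives the quoted 804 MB.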

AMD uses TSMC’s SoIC 3D packaging technology to place the memory on top of the compute die with direct copper-to-copper bonding of the through-silicon vias (TSVs) that connect the dies, slashing the resistance of the interconnects. AMD said V-Cache works without the use of micro bumps, the copper pads capped in solder, improving power efficiency, interconnect density, and signal routing, while limiting heat dissipation.

AMD said TSMC’s SoIC technology permanently bonds the interconnects in V-Cache to the CPU, closing the distance between the dies, resulting in 2 TB/s of communications bandwidth. As a result, the interconnects in the Milan-X CPUs have up to three times the power efficiency by consuming one-third the energy-per-bit and 200 times more interconnect density than the 2D chiplet packaging used by its third-generation EPYC CPUs.

AMD has previously unveiled plans to use V-Cache technology in its Ryzen CPUs for the PC market.

While the V-Cache is physically further from the CPU than the L3 cache that runs through the middle of the compute tile like a spinal cord, AMD said the performance penalty is limited. The company said that traveling through the interconnects and into the stacked die incurs less latency than leaving the CPU, traveling through the I/O tile in the 2.5D package, accessing additional DRAM in the system, and then returning to the CPU.

AMD said the 3D cache is a boon to a wide range of workloads in data centers, such as artificial intelligence, where it pays dividends to keep data as close to the processor as possible. But where Milan-X stands out is in computationally heavy workloads, such as modeling the structural integrity of a bridge, replicating the physics of an automotive test crash, and simulating air currents cascading around the wing of an airplane.

Another workload AMD is trying to address with Milan-X CPUs is semiconductor design, since the V-Cache guarantees “critical data” used in electronic design automation (EDA) is located closer to the CPU’s cores.

It is impossible for even the most skilled engineers to test every single detail in a final chip design by hand. Chip firms run thousands of simulations to verify the performance in chip designs before the final blueprints are manufactured. To save time, they run simulations at the same time on separate cores in the same CPU. But because the cores are all fighting over limited memory cache and bandwidth, performance takes a hit.

But using V-Cache to upgrade the amount of shared L3 cache means AMD’s Milan-X CPUs can keep even more information close to the CPU cores, reducing latency that can sap the performance of EDA workloads.

AMD said that a 16-core variant of Milan-X can do verification runs on semiconductor designs in Synopsys’s VCS tool around 66% faster than a third-generation, 16-core EPYC CPU without the new 3D V-Cache. Dan McNamara, senior vice president and general manager of AMD’s server business, said Milan-X makes it possible for chip firms to test designs faster or run more tests at the same time, reducing time-to-market.
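To put the 66% figure in practical terms, the sketch below converts a percentage speedup into run time and into the number of back-to-back runs that fit in a fixed window. It assumes "66% faster" means 1.66 times the throughput, which the article does not spell out, and the 10-hour baseline and 24-hour window are hypothetical numbers chosen only for illustration.

```python
def runtime_after_speedup(baseline_hours, percent_faster):
    """Run time if throughput rises by percent_faster (assumed meaning of 'X% faster')."""
    if percent_faster <= -100:
        raise ValueError("percent_faster must be greater than -100")
    return baseline_hours / (1 + percent_faster / 100)


def runs_in_window(window_hours, run_hours):
    """Whole sequential runs that complete within the window."""
    return int(window_hours // run_hours)


# Hypothetical example: a 10-hour verification run and a 24-hour window.
baseline = 10.0
faster = runtime_after_speedup(baseline, 66)

print(round(faster, 2))              # 6.02
print(runs_in_window(24, baseline))  # 2
print(runs_in_window(24, faster))    # 3
```

Under that reading, a 66% speedup cuts run time by roughly 40%, which is the trade-off McNamara describes: finish each test sooner or fit more tests into the same schedule.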

Microsoft said cloud services based on Milan-X processors are also up to 50% faster for automobile crash test modeling and deliver up to 80% higher performance for aerospace workloads versus rivals’ cloud services.

AMD said it has partnered with many of the major players in EDA and other system design tools, including Altair, Ansys, Cadence, Siemens, and Synopsys, among others, to improve how their software runs on Milan-X.

Top manufacturers of data center gear plan to roll out servers with Milan-X inside, including HPE, Dell, Cisco, Supermicro, and Lenovo, among others. AMD said Milan-X chips will be available by the first quarter of 2022.