By Dr. Venkat Mattela, Founder and CEO, Ceremorphic

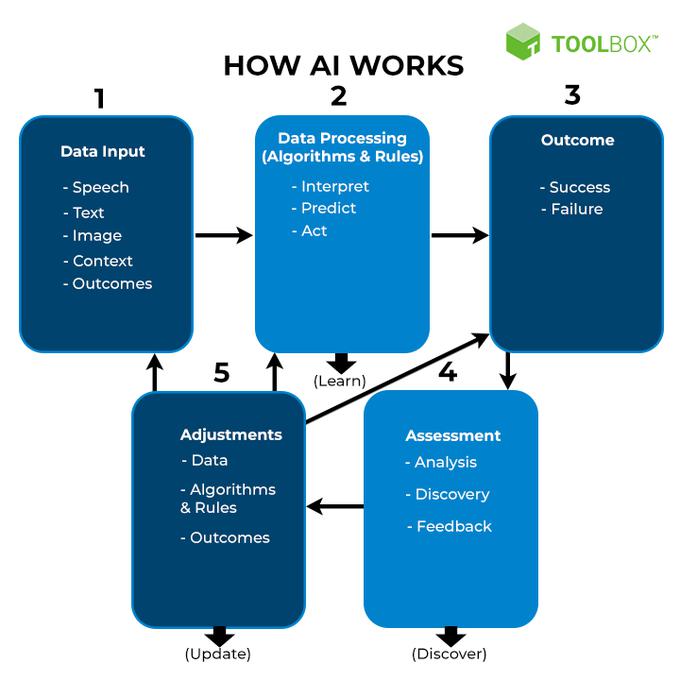

With the growth and widespread adoption of Artificial Intelligence (AI) and Machine Learning applications across all industrial segments, supercomputers have made their mark. They are now used in almost every aspect of our lives, from drug development to weather prediction and online gaming. Supercomputers, with their incredible intelligence, may be capable of saving the world, but as their performance improves, their power requirements are approaching those of a small city. It’s no exaggeration to claim that the semiconductor industry makes modern life possible. Over 100 billion integrated circuits see daily use internationally, and demand continues to grow, thanks in large part to advancements in rapidly developing markets for AI, autonomous vehicles, and the Internet of Things.

Thus far, the industry has consistently provided more powerful integrated chips in response to demand. But with the massive and growing consumption of data each day, supercomputing environments are becoming more common in everyday life, and their complex infrastructure makes monitoring and troubleshooting failures extremely difficult. This is because these infrastructures contain thousands of nodes that represent various applications and processors. To address these concerns, a real-time reliability analysis framework for high-performance computing environments is required. There is an urgent need to address the issues of reliability, security, and energy consumption that currently confront high-performance computing.

For instance, during the pandemic, high-performance computing providers gave researchers battling COVID-19 free access to the world’s most powerful computers. Researchers and institutions used supercomputers to track the spread of COVID-19 in real time, and they were also able to predict where the virus would spread by identifying patterns and examining how preventative measures such as social distancing were helping. Computing at that global scale was possible only because of High-Performance Computing, popularly known as HPC. HPC enables companies to scale computationally and develop deep learning algorithms that can handle exponentially growing amounts of data. However, with more data comes the demand for more computing power and higher performance specifications. As a result, we are now witnessing HPC merging with Artificial Intelligence, ushering in a new era!

Higher performance requires more energy, which is why the traditional approach to achieving higher performance is becoming unmanageable. Developing advanced algorithms that reduce the effective workload is a novel approach to energy consumption and one of the solutions. However, in today’s new era, where computation is abundant and Artificial Intelligence is making security defence even more difficult, architecture needs to be designed to counter future threats and enable new AI / ML processing. Another high-scaling opportunity that the semiconductor industry is moving towards is custom low-power circuit architecture for high-throughput short links. The industry today needs a new, dependable architecture that employs logic and machine learning to detect and rectify defects using the best hardware and software available.

It is commonly understood that in traditional circuits, making things faster requires more energy. Speed and power are constant adversaries. However, many of the strategies that help to reduce AI energy consumption also help to improve performance. For example, by keeping data local or stationary, you can save energy while avoiding the added latency of data fetches. Sparsity reduces the amount of computation required, allowing you to finish a workload faster. In simple terms: less computing and less data movement mean less energy consumed. Machine Learning is also an alternative way of solving problems with much greater efficiency (in energy, cost, performance, etc.).
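To make the sparsity point concrete, here is a minimal Python sketch that counts multiply-accumulate (MAC) operations for the same matrix-vector product computed densely and sparsely. The function names, the tiny weight matrix, and the use of MAC count as a stand-in for energy are all illustrative assumptions, not measurements from any real chip or the method of any particular product.

```python
def dense_matvec(matrix, vector):
    """Multiply every weight by its input, zeros included.

    Returns (result, mac_count), where mac_count is the number of
    multiply-accumulate operations performed.
    """
    macs = 0
    result = []
    for row in matrix:
        acc = 0.0
        for weight, x in zip(row, vector):
            acc += weight * x
            macs += 1
        result.append(acc)
    return result, macs


def to_sparse_rows(matrix):
    """Keep only the nonzero weights of each row as (column, weight) pairs."""
    return [[(col, w) for col, w in enumerate(row) if w != 0] for row in matrix]


def sparse_matvec(sparse_rows, vector):
    """Same product as dense_matvec, but zero weights are never touched."""
    macs = 0
    result = []
    for row in sparse_rows:
        acc = 0.0
        for col, weight in row:
            acc += weight * vector[col]
            macs += 1
        result.append(acc)
    return result, macs


# Hypothetical 3x4 weight matrix in which 9 of the 12 entries are zero.
weights = [
    [0.0, 2.0, 0.0, 0.0],
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 3.0],
]
inputs = [1.0, 2.0, 3.0, 4.0]

dense_out, dense_macs = dense_matvec(weights, inputs)
sparse_out, sparse_macs = sparse_matvec(to_sparse_rows(weights), inputs)

print("dense: ", dense_out, "MACs:", dense_macs)
print("sparse:", sparse_out, "MACs:", sparse_macs)
```

Both versions produce the same output vector, but the sparse version performs 3 MACs instead of 12 and reads only the inputs it actually needs. In real hardware the savings depend on how the sparse format is stored and accessed, so the operation count should be read as a rough proxy for work done rather than an energy figure.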

Today, the semiconductor technology roadmap provides tools and nodes for developing advanced silicon products that address this massive demand for high-performance computing. The 5nm node is very effective at providing high gate density (approximately 40M gates in 1 sq. mm area). With access to this type of technology node, a company can make very complex, high-performance chips in a reasonable physical size. However, these nodes pose challenges in managing leakage power and reliability. These two challenges, reliability and energy efficiency, currently stand in the way of making high performance commonplace in the AI / Machine Learning space.

As scale and complexity have increased, new challenges in reliability and systemic resilience, energy efficiency, optimization, and software complexity have emerged, implying that current approaches should be re-evaluated. While there has been much excitement about new technologies in both computing hardware and cooling, most users are still focused on making the most of the more traditional resources available to them.
