In today’s digital age, artificial intelligence (AI) and machine learning (ML) are emerging everywhere: facial recognition, pandemic outbreak detection and mitigation, access to credit, and healthcare are just a few examples. But do these technologies, which mimic human intelligence and predict real-life outcomes, align with human ethics? Can we create regulatory practices and new norms for AI? Above all, how can we get the best out of AI while mitigating its potential ill effects? We are in hot pursuit of the answers.

AI/ML technologies come with their share of challenges. Leading global brands such as Amazon, Apple, Google, and Facebook have been accused of bias in their AI algorithms. For instance, when Apple introduced Apple Card, some users reported that women were offered smaller lines of credit than men. The allegations damaged Apple’s reputation.

In a case with more serious repercussions, some U.S. courts use AI algorithms to inform prison sentences and parole terms. Unfortunately, these systems are built on historically biased crime data, so they can perpetuate and amplify the biases embedded in that data. Ultimately, this calls into question the fairness of ML algorithms in the criminal justice system.

The Fight for Ethical AI

Governments and corporations worldwide have been aggressively pursuing AI development and adoption. Today, AI tools that even non-specialists can set up are increasingly entering the market.

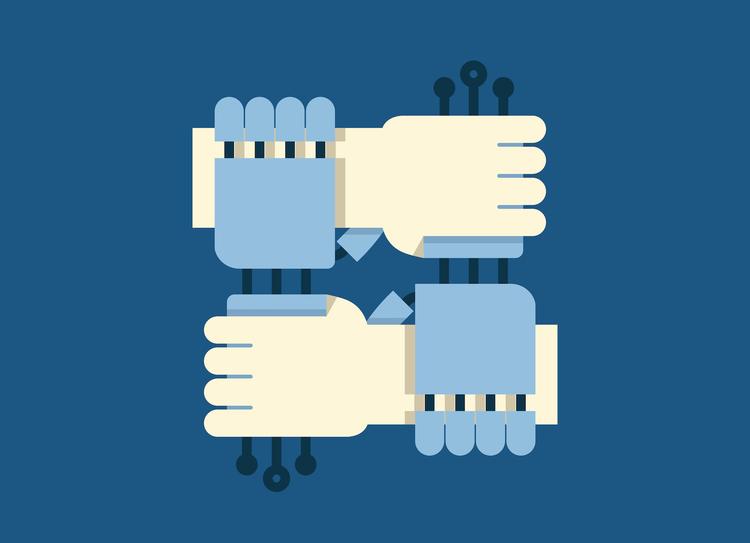

Amid this AI adoption and development spree, many experts and advocates worldwide have become skeptical about the long-term impact and implications of AI applications. They are concerned about how AI advancements will affect our productivity and the exercise of free will; in short, what it means to be “human.” The fight for ethical AI is a fight for a future in which technology is used to uplift humans rather than oppress them.

Global technology behemoths such as Google and IBM have researched bias in their AI/ML algorithms and taken steps to address it. One proposed solution is to document the data used to train AI/ML systems.

After bias, the most widely publicized concern is the lack of visibility into how AI algorithms arrive at a decision, a problem known as opaque algorithms or black box systems. The development of explainable AI has helped mitigate the adverse impact of black box systems. While some ethical AI challenges have been partly addressed, others, such as the weaponization of AI, are yet to be solved.

Many governmental, non-profit, and corporate organizations are concerned with AI ethics and policy. For example, the Partnership on AI to Benefit People and Society, a non-profit organization established by Amazon, Google, Facebook, IBM, and Microsoft, formulates best practices on AI technologies, advances the public’s understanding of AI, and serves as a platform for discussion about AI. Apple joined this organization in January 2017.

Today, national and transnational governments and non-governmental organizations are making many efforts to ensure AI ethics. In the United States, for example, the Obama administration’s Roadmap for AI Policy of 2016 was a significant leap towards ethical AI, and in January 2020, the Trump administration released a draft executive order on “Guidance for Regulation of Artificial Intelligence Applications.” The guidance emphasizes the need to invest in AI system development, boost public trust in AI, reduce barriers to AI adoption, and keep American AI technology competitive in the international market.

Moreover, the European Commission’s High-Level Expert Group on Artificial Intelligence published “Ethics Guidelines for Trustworthy Artificial Intelligence” on April 8, 2019. On February 19, 2020, the European Commission’s Robotics and Artificial Intelligence Innovation and Excellence unit published a white paper on excellence and trust in artificial intelligence innovation.

On the academic front, the University of Oxford hosts three research institutes that focus mainly on AI ethics and promote it as a structured field of study and application. The AI Now Institute at New York University (NYU) also researches the social implications of AI, focusing on bias and inclusion, labor and automation, liberties and rights, and civil infrastructure and safety.

Some Key Worries and Hopes on Ethical AI Development

Worries

Hopes

The Responsibility of Ethical AI

Tech giants like Microsoft and Google think governments should step in to regulate AI effectively. But laws are only as good as their enforcement. So far, that responsibility has fallen on the shoulders of private watchdogs and employees of tech companies who are daring enough to speak up. For instance, after months of protests by its employees, Google decided not to renew its contract for Project Maven, a U.S. military project that applied AI to drone footage.

We can choose the role we want AI to play in our lives and enterprises by asking tough questions and taking stern precautionary measures. To that end, many companies are appointing AI ethicists to guide them through this new terrain.

We have a long way to go before artificial intelligence becomes one with ethics. Until that day, we must self-police how we use AI technology.
